The future of HPC

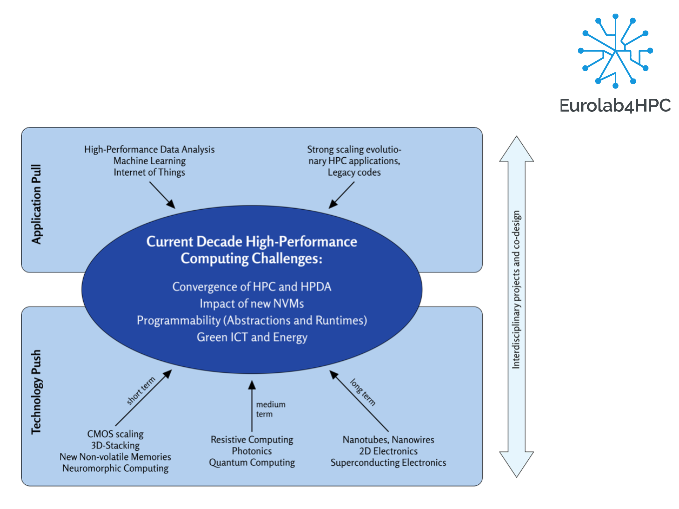

In March 2020, the EuroLab4HPC project released the 2020 update of its “EuroLab4HPC Long-Term Vision on High-Performance Computing”. The document aims to help researchers identify the main research challenges and open questions in hardware and software technologies for the post-exascale period from 2023 to 2030.

One example is the end of complementary metal–oxide–semiconductor (CMOS) scaling, which is driving an explosion in new technologies. Whichever technologies eventually triumph, the trend is towards more complex systems: future system architectures will include heterogeneous accelerators, in- and near-memory computing and storage-class memories, as well as new underlying technologies such as photonics, graphene, resistive computing, and quantum computing. Much more is needed, of course, than the basic technologies. To build a real, scalable quantum computer, for instance, it will be necessary to develop a full stack, as for classical computers, encompassing high-level languages, compilers, microarchitectures and, until large numbers of physical qubits are possible, simulators.

Another challenge is finding an alternative to the von Neumann architecture, which has served as the execution model for more than five decades. Energy consumption and the demand for ever more memory bandwidth mean that the distance between memory and processing must be reduced. Solutions might be found in research on materials for new kinds of in-memory computing, as well as on architectures, compilers and tools.

Even the best programmers will not be able to keep up with hiding or mitigating the complexity of heterogeneous and diverse hardware. Single-source programming models need to be coupled with intelligence across the whole programming environment, since manual optimization of data layout, placement, and caching in highly complex software will become uneconomic and too time-consuming.
Performance tools that diagnose bottlenecks or spot anomalous behaviour, and map them back to the source code, will need techniques from data mining, clustering and structure detection. Nevertheless, because scientific codebases have very long lifetimes, on the order of decades, today’s abstractions will continue to evolve incrementally well beyond 2030.

The document discusses many other important topics, such as the convergence of HPC and cloud computing, the impact of heterogeneous accelerator interfaces (CCIX, Gen-Z, CAPI, etc.), and the integration of network and storage. To find out more about these technologies and the main research challenges and open questions, see the EuroLab4HPC Long-Term Vision on High-Performance Computing, available from the EuroLab4HPC website at www.eurolab4hpc.eu.