Helping robots get to grips with the real world

Although robots are an increasingly common presence in our lives, they are still largely restricted to controlled environments such as assembly lines, or applications where they only need to avoid physical objects, rather than interact with them. “The key issue we face today in robotics is when you want them to walk, climb and manipulate objects,” explains project coordinator Ludovic Righetti, senior researcher at the Max Planck Institute for Intelligent Systems and associate professor at New York University. “Managing physical interaction is an unsolved problem in robotics, the most difficult issue we’re facing. We can develop ad hoc algorithms for a few sensors. But a general theory for any robots, nobody knows how to do that.”

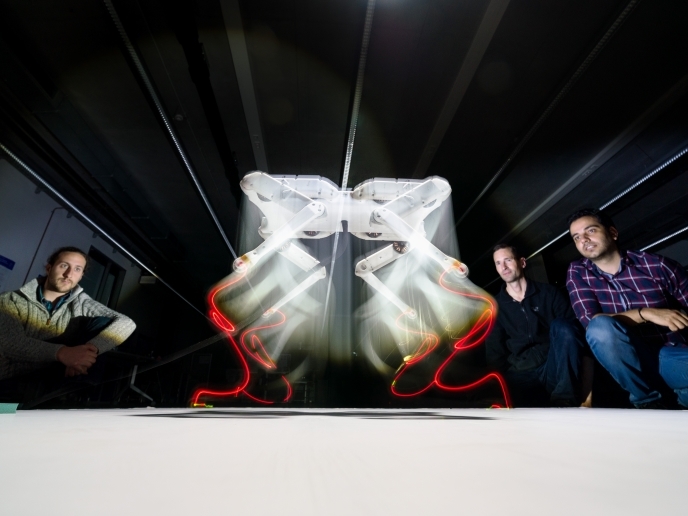

Machines in motion

The EU-funded CONT-ACT project aimed to develop fundamental knowledge and generic algorithms to address this issue. The project rested on two pillars: the first was to use an understanding of physics to derive the basic principles of physical interaction; the second was to use data from real robot experiments to improve the behaviour of these systems. Righetti’s group had already developed a generic method to control legged robots, teaching the machines how to adjust the force applied by their motors to keep their balance. To build on this, they had to solve the same problem for a robot in motion. “This is hard to solve in real time,” says Righetti. “Whatever we’re doing, we need to solve it in a few tens or hundreds of milliseconds.” By breaking the problem down into more manageable parts, Righetti and his team created a set of algorithms that let the robot coordinate the movement of its entire body. “We designed a controller that allows the robot to respond to changes in the environment,” he adds. “So, walking up uneven stairs, or if someone pushes it, we came up with algorithms that deal with that.”

The team also developed machine learning techniques that allow the robots to integrate information from additional sensors. “We have robots with tactile surfaces that can detect contact, and measure force and pressure. But if you look at algorithms that control how these robots grasp and manipulate objects, usually they do not use this information,” says Righetti.
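To give a feel for what “adjusting the force to keep balance” means, here is a deliberately simplified sketch. It is not the CONT-ACT controller. It assumes a planar robot standing still on two feet and splits its weight between them so that the net vertical force matches gravity and the net moment about the centre of mass is zero (the classic lever rule). The names `distribute_support_force` and `FootForces` are hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass

GRAVITY = 9.81  # m/s^2


@dataclass
class FootForces:
    left: float   # vertical contact force on the left foot, in newtons
    right: float  # vertical contact force on the right foot, in newtons


def distribute_support_force(mass: float, com_x: float,
                             left_x: float, right_x: float) -> FootForces:
    """Split body weight between two feet (hypothetical, planar, quasi-static model).

    Solves  f_left + f_right = m * g
    and     f_left * (left_x - com_x) + f_right * (right_x - com_x) = 0,
    i.e. no net vertical acceleration and no net moment about the centre of mass.
    """
    if right_x <= left_x:
        raise ValueError("right foot must lie ahead of left foot along x")
    if not left_x <= com_x <= right_x:
        # Outside the support region, a pushing-only (non-negative) solution does not exist.
        raise ValueError("centre of mass outside the support region")
    weight = mass * GRAVITY
    span = right_x - left_x
    # Lever rule: the foot closer to the centre of mass carries more of the load.
    f_left = weight * (right_x - com_x) / span
    f_right = weight * (com_x - left_x) / span
    return FootForces(f_left, f_right)


if __name__ == "__main__":
    # A 50 kg robot whose centre of mass has shifted 5 cm toward the right foot,
    # e.g. after a push; the right foot now takes most of the weight.
    print(distribute_support_force(50.0, 0.05, -0.1, 0.1))
```

Because the answer is closed-form, it can be recomputed every control cycle as the centre of mass moves. A real whole-body controller for a walking robot typically has to handle many contacts, friction limits and joint torques at once, usually by solving an optimisation problem rather than a single formula, which is why meeting the millisecond budget Righetti describes is hard.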

Virtual space

Combining this sensor data is crucial to building a generic algorithm for handling objects. “If you look at the raw data, when you change one slight thing, like the shape or colour of an object, the readings from sensors will be very different,” notes Righetti. “But they do describe something similar.” By mapping these inputs to a shared virtual space, robots can learn general models of their environment and acquire behaviours that let them handle similar but unseen objects and environments, rather than having to be taught how to deal with each variation individually.

Righetti admits that, ultimately, he was not able to crack the biggest unsolved problem in robotics: finding algorithms to make robots truly autonomous. But he says his team made significant progress toward that goal. “We now have algorithms that are pretty mature, and are among the fastest and most reliable that exist today.” He adds that further work on robotic movement and physical interaction with objects and the environment is likely to dominate his research for the next few years: “The story is far from finished. We keep making a lot of progress and we’ll continue our goal of finding a fundamental set of algorithms.”