Smart robots master the art of gripping

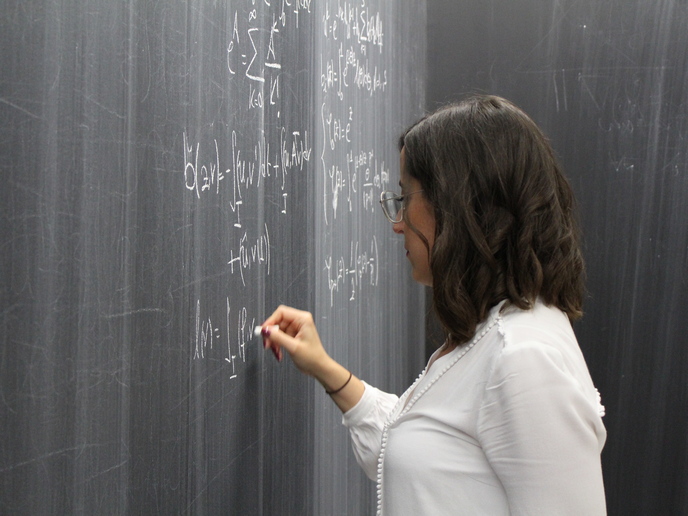

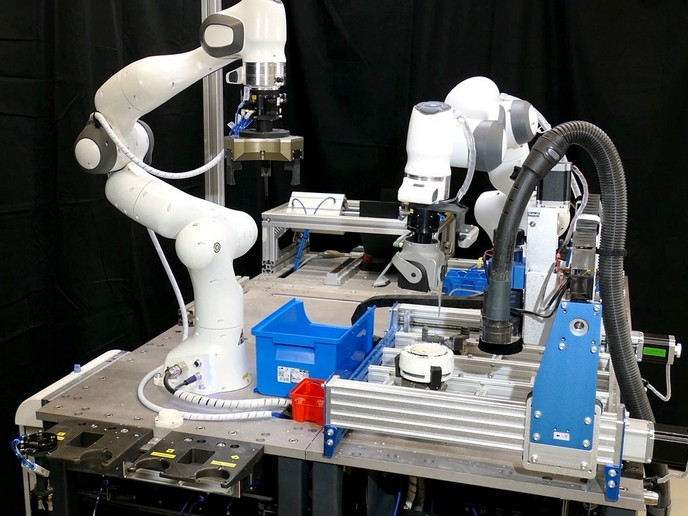

During the last few decades, humans have increasingly relied on robots to perform a variety of tasks. Robots have been used extensively on production lines for tasks such as car assembly. Despite this widespread success, automatic assembly is still held back by the time it takes to programme and reprogramme robots. To address this challenge, researchers established the EU-funded project SARAFun. The project’s solution aims to give industrial robots perception, learning and reasoning abilities, and provides tools to automate robot programme generation and to design task-specific hardware.

Learning by watching human behaviour

“The system is built around the concept of a robot capable of learning and executing assembly tasks such as insertion or folding demonstrated by a human instructor,” explains Dr Ioannis Mariolis. After analysing the demonstrated task, the robot generates and executes its own assembly programme. Based on the human instructor’s feedback as well as sensory feedback from vision, force and tactile sensors, the robot can progressively improve its performance in terms of speed and robustness.

SARAFun’s ambitious aim was to develop a system capable of executing new assembly tasks in less than a day. “Maintaining a humanlike reach for assembly of small parts within a very small space is critical to minimising the footprint on the factory floor. It also enables the robot to be installed into work stations currently used only by humans,” adds Dr Mariolis.

Implementation of the interface

The work relied heavily on smooth and robust user interaction with the system through the human-robot interface. This interface consists of several modules that enable the operator to teach the assembly task to the robot and supervise the learning process. In the teaching phase, the user creates the assembly task by specifying a name, selecting the parts that the robot must assemble, and defining the type of assembly operation.
In the next step, the assembly parts are placed within the camera view and the system is asked to identify them. After the parts have been detected, the operator demonstrates the assembly task in front of the camera. The recorded information is then analysed, and the important frames of the demonstration, called key frames, are extracted automatically. Upon user confirmation, the system uses the key-frame information, which includes the tracked positions of the assembly parts and the instructor’s hands, to generate the assembly programme for the robot.

Candidate grasps for the assembly parts are proposed, along with robotic finger designs that increase grasp stability. After the grasping positions have been adjusted and appropriate fingers designed to compensate for the robot’s inferior dexterity compared with the human hand, the actual fingers are 3D printed and installed in the robot grippers. Once the programme is loaded for execution, the robot motions are generated using the information extracted from the key frames. The robot repeats the assembly operation until it reaches the desired level of autonomy.

Almost zero programming

Researchers have successfully completed many demonstrations using ABB's dual-arm collaborative robot YuMi. Armed with its grippers, the robot uses SARAFun’s system components (3D visual sensors, grasp planning, slip detection, motion and force control for both arms, and a physical human-robot interface) to mimic bimanual tasks demonstrated by the human instructor.

“The result is a flexible assembly programme that can adapt to the working environment without specific planning by the user. Compared to state-of-the-art technology, the simple graphical interface is much easier for non-experts to use,” notes Dr Mariolis. Tested on different assembly use cases involving cell-phone parts and emergency stop buttons, the system successfully learned the demonstrated assembly tasks in less than a day.

SARAFun’s assembly robot could significantly change industrial manufacturing worldwide and encourage a re-evaluation of how assembly is done. Products with short life cycles require frequent programme changes. Unlike today’s robots, which know only their nominal task, this smart robot for bimanual assembly of small parts is designed to cope with regular changes in the production line.