Big data science researchers will have their day in the cloud

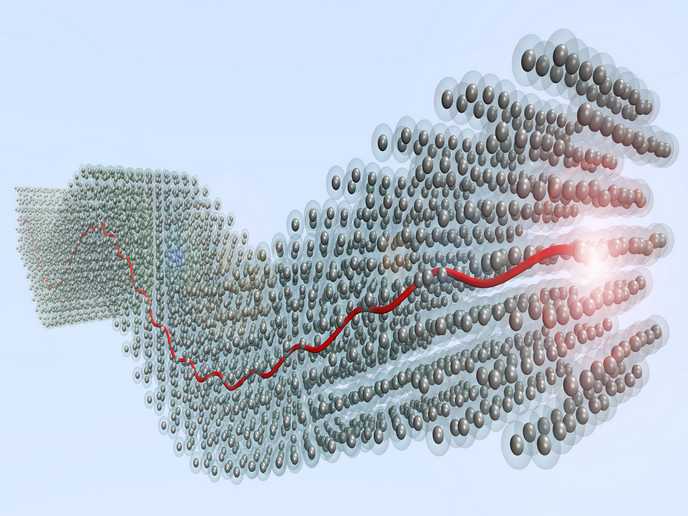

Some branches of science produce colossal amounts of data. For example, particle physics and genomics labs output around a petabyte (PB) of data every day. A petabyte is a thousand terabytes (1 million gigabytes), or roughly a quarter of a million DVDs. By comparison, the 10 billion or so photos stored on Facebook amount to approximately 1.8 PB. Most scientific research organisations are unable to accommodate such amounts using their own facilities, and the problem is worsening. A separate problem with current scientific storage is that much of this data is neither published nor publicly available. In response, the European Open Science Cloud (EOSC) initiative mandates open science: due to be operational by 2020, it is intended to make scientific results public and shareable. EU-funded projects, including EOSC-hub and EOSCpilot, have collaborated in the development and maintenance of the EOSC portal.

The EU-funded HNSciCloud project is a consortium of commercial cloud service providers and public research organisations tasked with solving the problems facing data-intensive science. Researchers identified a gap in the market and managed tender applications from providers to build a European high-performance cloud platform for scientific organisations. The idea is similar to ordinary cloud services, but on a gigantic scale and tailored to special scientific needs, including EOSC compliance.

Tender and design

The project’s work began with a tender phase, during which the team consulted with providers and users to set requirements. This yielded a shortlist of four consortia. During the subsequent design phase, the selected consortia prepared and submitted their proposals, of which the HNSciCloud evaluation committee selected three to proceed to the prototype and pilot phases. During these latter stages, project researchers tested the platform’s scalability and reliability using realistic scientific use cases. First among those use cases was the Worldwide Large Hadron Collider Computing Grid, a global collaboration of 170 computing centres in 42 countries that processes CERN particle physics data. HNSciCloud testing also involved many other high-demand European scientific research groups. These include PanCancer, for analysing 2 000+ whole cancer genomes daily, and the Square Kilometre Array’s low-frequency telescope.

Specialised services

“The prototype and pilot phases successfully showcased the benefits of the hybrid cloud model, and demonstrated that organisations may conveniently surpass their computing infrastructure limitations by adopting specialised commercial cloud services,” says project director Bob Jones. As noted in a project video, it “combines services at the infrastructure as a service level to provide an environment supporting the entire life cycle of science work flows.” Services include compute and storage, transparent access to petabyte-size data sets, network connectivity, federated identity management and innovative payment models. “The result is a hybrid cloud platform, now available to the general scientific community, able to meet the extremely demanding ICT requirements of even the most data-intensive sciences,” adds Jones. HNSciCloud is certified to comply with European standards and legislation in terms of security and data protection, and with the EOSC. The platform is based on commercially supported open-source code that does not require licences. These innovative cloud services provide new ICT capacity that will expand Europe’s research capability.