Research deciphers how our brain uses vision to plan stable grasps

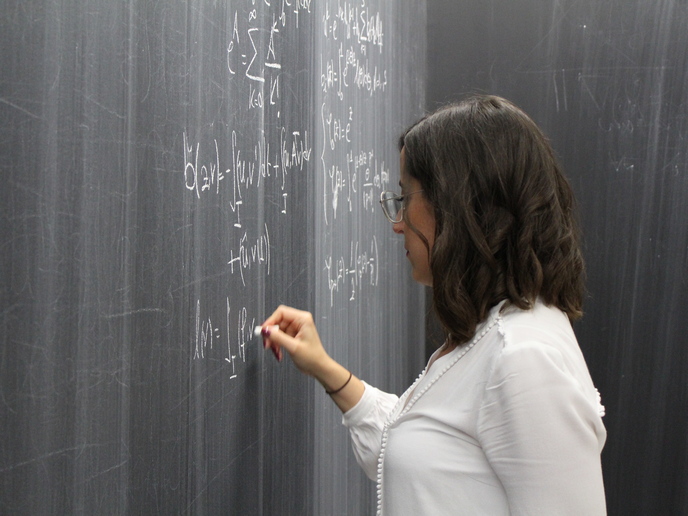

Our sense of sight guides our actions towards objects. For example, if someone were asked to pass the salt, they would first look for it on the table and then grasp it with their hand. How our brain achieves this seemingly trivial behaviour is still far from understood. For any desired action, be it stirring a cup of coffee or guiding a pen held between thumb and index finger across a sheet of paper, we move our fingers in subtle ways that let us execute stable, comfortable grasps. The brain somehow works out which of all the possible grasps are actually going to succeed. “Understanding how we use vision to pick up and interact effectively with arbitrary objects is a long-standing quest in behavioural science. It is also a huge computational challenge for state-of-the-art robotic systems, which seem to have a hard time visually identifying effective grasps in nearly 20 % of cases,” notes Guido Maiello, coordinator of the Marie-Curie–funded VisualGrasping project.

Unlocking how the brain combines different rules into one movement

“We hypothesised that the brain uses a set of rules to identify successful grasps. These should rely on information about the object’s 3D shape, orientation and material composition,” adds Maiello. The researchers attached small markers to the hands of paid volunteers and recorded how they moved their hands while interacting with objects made of different materials. Some of these objects were 3D-printed in complex shapes. The team combined these behavioural observations with computer models that could predict how humans would grasp the objects. The simulations seemed to match the experimental results almost perfectly.

Decoding how the brain sees the world in 3D

Going a step further, the researchers sought to determine how the brain reconstructs an object’s 3D shape from the 2D images reaching our eyes. Maiello states: “The third dimension humans perceive with their vision comes from the brain combining the slightly different left-eye and right-eye images into a whole, a phenomenon called stereopsis. However, the structure of our retinae and the information processing abilities of our brain lead to imperfect reconstructions of our 3D environment.” To investigate these limitations further, the researchers asked participants to view stereo images and report the depth they perceived. The results were consistent with theoretical models that attempt to describe how our brain extracts and processes depth from 2D images.

“Our sophisticated models could be the building blocks for constructing a comprehensive theory of how humans use vision to guide hand motor skills. In particular, we aim to throw further light on how the visual inputs picked up and converted into electrochemical signals in the retinae are fed into and processed by the complex network of nerve cells in the visual and motor cortices. These neural computations give rise to the explicit motor commands that govern fine reaching and grasping movements,” explains Maiello.

Project insights into how humans use vision to plan grasps could have far-reaching implications for engineering applications, such as the design of more effective robotic actuators and of more immersive, user-friendly augmented reality technologies. In medicine, they could substantially improve the management of neurological disorders and support neurorehabilitation, and may also help uncover the mechanisms behind vision loss.