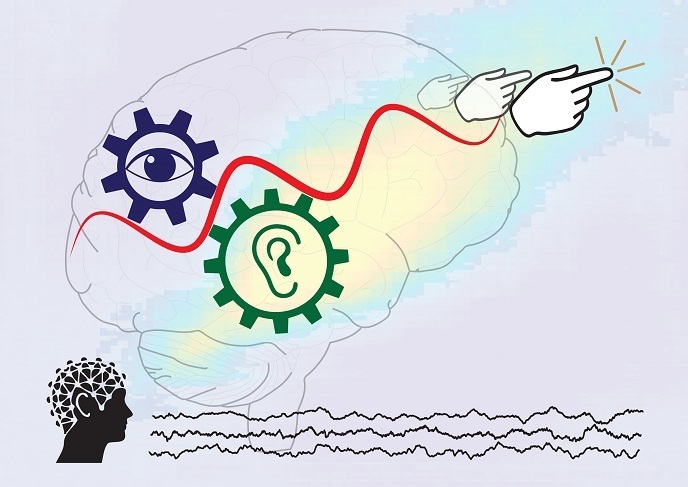

Making sense of the sights and sounds of life with multiple senses at once

Combining information across multiple senses helps in choosing and generating the relevant action. For instance, “if something is very prominent in our vision, we would not necessarily need the multisensory mechanism to decide whether to take action. But, if we are walking in the woods at dusk, a faint sound of howl may help to perceive a barely visible dog from the rising mist. In such a context, linking the two pieces of information will be crucial to decide whether we need to do something,” notes Mercier, coordinator of the EU-funded MIMe project.

From individual to multimodal

Funded by the Marie Skłodowska-Curie programme, MIMe examined this complex interplay between senses and decision making. Perceptual decision making – the process that guides our behaviour – can be divided into a sensory encoding stage (where a sensory signal is encoded in the related sensory cortex) and a decision formation stage. “We aimed to figure out whether sensory cues from different senses are intertwined from the moment they enter the brain, namely in the encoding stage, or during the decision formation stage. Furthermore, we sought to identify the neural mechanisms and architecture underlying multisensory convergence,” explains Mercier.

An orchestration of brain oscillations

Recent findings suggest that information transfer in brain circuits relies on neural oscillations, which reflect rhythmic variations in neural activity. When two groups of neurons oscillate in phase, they are more likely to exchange information. While further research is needed on the topic, the findings point to a potential neuronal mechanism the brain may use to associate sensory inputs and create a multimodal construct. “MIMe examined whether multimodal association relies on the phase synchronisation between distant neural network nodes,” adds Mercier.
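For readers who want to see what ‘oscillating in phase’ means in numbers, here is a minimal sketch of one common way to quantify it, the phase-locking value. It is an illustration only, not the MIMe analysis pipeline: the function name, the simulated 10 Hz rhythm, the lag and the jitter level are all assumptions made for this example.

```python
import cmath
import math
import random


def phase_locking_value(phases_a, phases_b):
    """Return a value between 0 (no consistent phase relation) and 1 (perfect locking)."""
    if len(phases_a) != len(phases_b) or not phases_a:
        raise ValueError("phase series must be non-empty and of equal length")
    # Turn each phase difference into a unit vector and average the vectors.
    # If the differences cluster around one angle, the average stays long.
    mean_vector = sum(
        cmath.exp(1j * (a - b)) for a, b in zip(phases_a, phases_b)
    ) / len(phases_a)
    return abs(mean_vector)


rng = random.Random(0)  # fixed seed so the illustration is repeatable
n_samples = 1000

# Hypothetical "auditory" site: phase of a 10 Hz rhythm sampled at 1 kHz.
auditory = [2 * math.pi * 10 * t / 1000 for t in range(n_samples)]

# Hypothetical "visual" site that follows with a constant lag and slight jitter.
visual_locked = [p - math.pi / 4 + rng.gauss(0, 0.1) for p in auditory]

# Hypothetical "visual" site whose phase is unrelated to the auditory one.
visual_unrelated = [rng.uniform(-math.pi, math.pi) for _ in range(n_samples)]

plv_locked = phase_locking_value(auditory, visual_locked)
plv_unrelated = phase_locking_value(auditory, visual_unrelated)
```

In this toy setting, `plv_locked` comes out close to 1 and `plv_unrelated` close to 0. Note that a constant lag between the two sites does not lower the value; only an inconsistent phase relationship does.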

‘Shaping’ what we hear

To achieve their goals, project researchers first conducted a study on patients implanted with intracranial electrodes for clinical purposes. The focus was on recording intracranial neural oscillations while participants were exposed to illusory contours accompanied by a sound. Notably, the researchers observed strong synchronisation between the oscillatory activity of the auditory and visual cortices. “This communication between distant neuronal populations represents the encoding of a multimodal object: The auditory cues influenced what patients thought they were seeing, namely the shape formed by the illusory contours,” notes Mercier.

Multisensory integration is pervasive in the brain

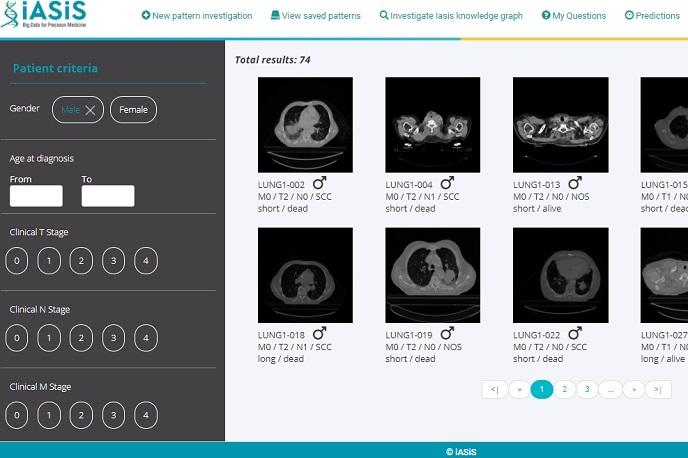

In another study, project researchers exposed healthy participants to a dynamic sequence of audio and visual signals. The goal was to examine how they perform complex computations and react to particular combinations of cues. Using machine learning methods to analyse the brain's electrical activity, researchers reported that multisensory integration accelerated brain dynamics both during the sensory encoding stage and during the stage in which participants decided on their response. MIMe's results indicate that multisensory integration is pervasive in the human brain and completes different processes along the processing hierarchy. “Thorough understanding of the neural dynamic at play between the senses during the formation of a decision will shed new light on difficulties encountered by some of the population, such as people with autistic traits,” concludes Mercier.