Developing technologies, but not forgetting ethics

Smart information systems (SISs) are changing our lives. Built on AI and Big Data analytics, these systems contribute to society in significant ways, from disease prevention to crime reduction. Unfortunately, they are also put to harmful uses, such as assigning beauty scores so that dating apps can match equally attractive people, or analysing people’s photos to predict their body mass index (BMI) for health insurance policies. To counteract the damage that such uses do to society and its values, the EU-funded SHERPA project is investigating the different ways in which SISs affect ethics and human rights.

Not taking facial recognition at face value

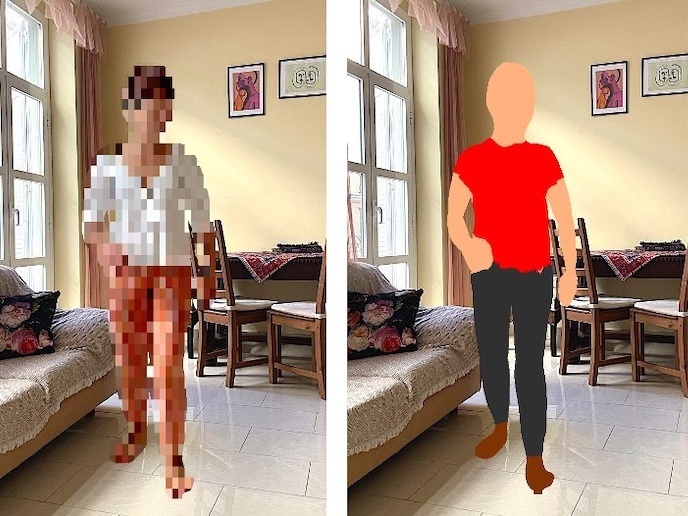

Hoping to make people question the reliability of facial recognition algorithms, Tijmen Schep, an artist who is part of the SHERPA consortium, created an interactive documentary titled ‘How Normal Am I’. Taking the form of a test, the documentary shows how facial recognition algorithms are used to judge you. In a few short minutes, your face is analysed and rated for age, beauty and gender. It is also used to assess your emotional state and to estimate your BMI and life expectancy.

“As face recognition technology moves into our daily lives, it can create this subtle but pervasive feeling of being watched and judged all the time,” Schep commented in the documentary. “You might feel more pressure to behave ‘normally’, which for an algorithm means being more average. That’s why we have to protect our human right to privacy, which is essentially our right to be different. You could say that privacy is a right to be imperfect.”

The documentary also highlights how unreliable these algorithms are: it soon becomes apparent that the scores are easy to manipulate. For example, moving your head up and down alters the age prediction, and simply raising your eyebrows can result in a lower BMI score. In addition, the algorithm’s beauty assessments are biased, since they are based only on beauty scores provided by Chinese students. Because the AI models used in the documentary run in the viewer’s own browser, no personal data is sent to the cloud.

Privacy at home

Tijmen Schep also served as lead designer for another SHERPA-supported system, one that invites us to consider what a smart home would look like if privacy were the top priority. Called Candle, this privacy-friendly smart home system was designed to store all data inside the home and to run without an internet connection. This is possible because, unlike other smart home systems, it comes with completely local voice control. Candle’s features include temperature, humidity, CO2 level, dust and electricity-use sensors, as well as alarms and smart locks. To help rebuild the trust in SISs that has been damaged by a series of privacy scandals, Candle’s creators have made the source code freely available. “Our goal is to accelerate the industry towards privacy friendly products by showing innovative examples of what these products could look like,” stated Schep on the Candle website.

SHERPA (Shaping the ethical dimensions of smart information systems (SIS) – a European perspective) aims to find sustainable solutions that benefit not only the innovators of technologies but also the society that uses them. The project ends in October 2021.

For more information, please see: SHERPA project website