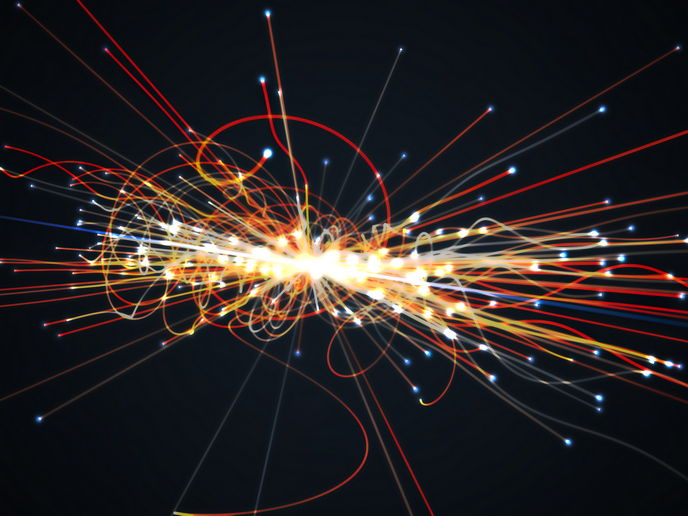

Data extraction — Large Hadron Collider

Enormous numbers of particle collisions take place in the Large Hadron Collider (LHC) at the European Organization for Nuclear Research (CERN), near Geneva on the Franco-Swiss border. Analysing the data from these events is crucial for discovering new particles. A key ingredient of that analysis is the set of parton distribution functions (PDFs). PDFs encode how the momentum of each colliding proton is shared among its constituents, the quarks and gluons (collectively called partons). These PDFs have to be extracted from experimental data.

The EU-funded project 'Precision parton distribution for new physics discoveries at the Large Hadron Collider' (DISCOVERY@LHC) has devised a novel approach to this extraction. The method uses artificial neural networks, machine learning techniques and genetic algorithms to extract the PDFs from experimental data. This removes the need to impose a theoretically motivated functional form on the PDFs in advance. The project researcher published NNPDF2.3 (Neural Network Parton Distribution Functions), the first PDF set to include direct constraints from LHC data. Other scientists working at the LHC have increasingly adopted the NNPDF sets. Later, in 2013, the researcher presented the first NNPDF set to include quantum electrodynamics (QED) effects. The project has thus achieved its principal goal: a new generation of parton distributions for studying LHC phenomena.
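The fitting method above combines a neural network with a genetic algorithm. The toy Python sketch below shows how those two ingredients can work together in principle. A small neural network serves as a flexible parametrization of a single distribution, and a simple genetic algorithm (mutation plus selection, with no gradients) tunes its weights to minimise a χ² against pseudo-data. This is an illustrative assumption, not the DISCOVERY@LHC or NNPDF code. The target shape sqrt(x) * (1 - x)^3, the noise level, the network size, and the names `make_pseudo_data`, `network`, `chi2` and `genetic_fit` are all hypothetical. In a real PDF fit the network output is not compared with data directly. It instead enters theoretical predictions for measured cross sections.

```python
import math
import random

# Hypothetical toy target shape, loosely resembling a valence-quark distribution.
def true_shape(x):
    return math.sqrt(x) * (1.0 - x) ** 3

def make_pseudo_data(n_points=20, sigma=0.01, seed=0):
    """Hypothetical 'measurements': the toy shape plus Gaussian noise of width sigma."""
    rng = random.Random(seed)
    xs = [(i + 0.5) / n_points for i in range(n_points)]
    ys = [true_shape(x) + rng.gauss(0.0, sigma) for x in xs]
    return xs, ys, sigma

N_HIDDEN = 5
# Layout: N_HIDDEN input weights, N_HIDDEN hidden biases, N_HIDDEN output weights, 1 output bias.
N_PARAMS = 3 * N_HIDDEN + 1

def sigmoid(z):
    z = max(-50.0, min(50.0, z))  # clamp so math.exp cannot overflow
    return 1.0 / (1.0 + math.exp(-z))

def network(params, x):
    """One-hidden-layer perceptron: 1 input -> N_HIDDEN sigmoid units -> 1 linear output."""
    out = params[-1]
    for j in range(N_HIDDEN):
        w_in, b, w_out = params[j], params[N_HIDDEN + j], params[2 * N_HIDDEN + j]
        out += w_out * sigmoid(w_in * x + b)
    return out

def chi2(params, xs, ys, sigma):
    """Chi-squared per data point between the network and the pseudo-data."""
    return sum(((network(params, x) - y) / sigma) ** 2 for x, y in zip(xs, ys)) / len(xs)

def genetic_fit(xs, ys, sigma, pop_size=20, n_generations=200, step=0.1, seed=1):
    """Minimal genetic algorithm; returns the best weights and the best chi2 per generation."""
    rng = random.Random(seed)
    population = [[rng.gauss(0.0, 1.0) for _ in range(N_PARAMS)] for _ in range(pop_size)]
    history = []
    for _ in range(n_generations):
        # Mutation: every parent yields one child by a Gaussian nudge of all its weights.
        children = [[w + rng.gauss(0.0, step) for w in parent] for parent in population]
        # Selection: the pop_size lowest-chi2 individuals among parents and children survive.
        scored = sorted(((chi2(p, xs, ys, sigma), p) for p in population + children),
                        key=lambda t: t[0])
        population = [p for _, p in scored[:pop_size]]
        history.append(scored[0][0])
    return population[0], history

if __name__ == "__main__":
    xs, ys, sigma = make_pseudo_data()
    best, history = genetic_fit(xs, ys, sigma)
    print(f"chi2 per point: first generation {history[0]:.1f}, last generation {history[-1]:.2f}")
```

Parents compete with their own children for survival, so the best χ² in this sketch can never get worse from one generation to the next. Pairing a flexible network with a gradient-free search like this is one way to avoid committing to a fixed functional form in advance.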